Nature’s round-up of innovations that are poised to make a splash in the year ahead.

Michael Eisenstein

From quantum computing and mRNA therapeutics to artificial-intelligence-powered climate modelling, here are seven technologies that Nature will be keeping its eye on.

Xenotransplantation

Every day, around two dozen people die awaiting an organ transplant in the 46 countries of the Council of Europe, together with 13 in the United States. The real toll might be higher still: according to Alexandre Loupy, a nephrologist at Necker Hospital in Paris, “many patients with terminal organ failure are not even wait-listed”.

Xenotransplantation — replacing damaged human tissues with counterparts from closely related animal species — offers a tantalizing alternative to precious human organs. But such transplants tend to fail quickly, with the remarkable exception of one woman who survived nine months after receiving a chimpanzee kidney in 1964.

The problem is immune rejection. Pig cells, for instance, are coated with a carbohydrate, called alpha-gal, that triggers a strong immune reaction in humans, who lack this molecule. Precision genome editing with CRISPR–Cas9 has given scientists an effective tool for eliminating this and other sources of rejection and, in combination with next-generation immunosuppressants, this tool is hugely improving patient outcomes.

In 2024, clinicians at Massachusetts General Hospital in Boston teamed up with xenotransplantation company eGenesis in Cambridge, Massachusetts, to perform the first transplant of a pig kidney into a living person [1]. The kidney came from an animal with 69 genomic modifications to knock out immunity-triggering antigens and dormant viral sequences while also inserting human genes that reduce inflammation and prevent abnormal blood clotting. The patient survived for 52 days before dying from unrelated cardiac issues. Subsequent pig-kidney recipients in the United States and China remained stable for more than eight months before returning to dialysis, nearly matching 1964’s durability record.

The first transplant of an engineered pig heart into a person, in 2022. Credit: University Of Maryland School Of Medicine/ZUMA/Alamy

And it’s not just kidneys. In 2022, Muhammad Mohiuddin, a surgeon at the University of Maryland School of Medicine in Baltimore, and his colleagues described the first transplant of an engineered pig heart into a person, who survived for 60 days after surgery [2]. And in 2025, teams in China reported xenotransplantation of pig liver [3] and even lung [4] into people who had been declared brain dead, a key step towards working with recipients capable of recovery.

Even a transient xenograft could buy precious time for patients awaiting a human donor, but with a deeper understanding of individual determinants of transplant rejection, these substitutes could become a long-term solution, says Leonardo Riella, chair of transplantation at Massachusetts General Hospital and one of the leads on the 2024 kidney transplant. “The xenotransplant really permits us to think outside the box and personalize that kidney and make it invisible to your immune system,” he says.

AI-powered meteorology

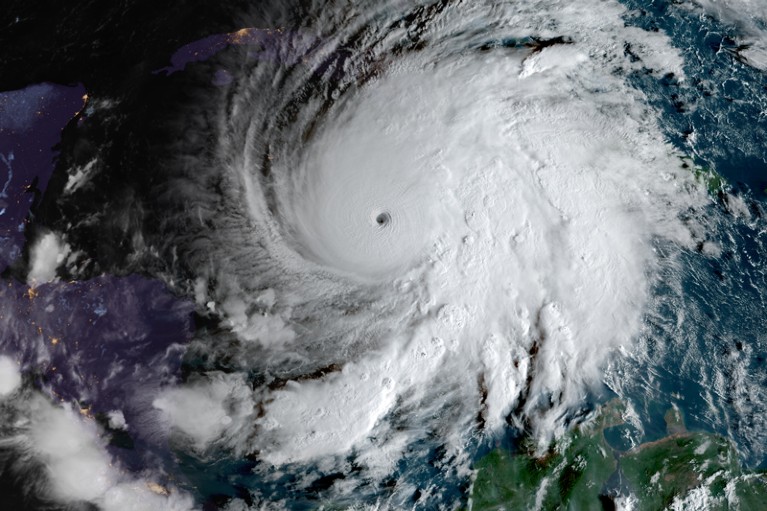

In October 2025, an AI model from Google DeepMind in London gave the US National Hurricane Center an early warning about the serious threat posed by Hurricane Melissa. The model anticipated the storm’s evolution to category-5 intensity days in advance and accurately predicted its trajectory across the Caribbean, whereas older models fell short.

That success is just one example of how AI methods are accelerating and improving local weather forecasting, storm tracking and even global climate modelling, with ever-more-sophisticated models rapidly emerging.

In some ways, this is an ideal arena for AI. Earth and atmospheric researchers have tons of data at their fingertips. But wrangling those data into a forecast has historically relied on sophisticated and computationally intensive numerical weather-prediction models to crunch through complex differential equations. “They involve literally millions of lines of code and a large team to run them,” says Richard Turner, a machine-learning researcher at the University of Cambridge, UK. But over the past three years, promising AI models have begun to emerge, including Pangu-Weather, from Huawei Cloud in Shenzhen, China, which used deep learning to accelerate forecasting up to 10,000-fold relative to existing methods [5].

AI models are advancing weather forecasting. Credit: CSU/CIRA & NOAA

Most models tackle only part of the forecasting workflow. But in 2025, Turner and his colleagues published Aardvark, an ‘end-to-end’ model [6] that was trained to ingest raw data from sources including weather stations and satellites, and deliver localized forecasts up to ten days ahead. “We could literally run it off a desktop machine in an office,” says Turner, who notes that Aardvark’s accuracy was competitive with existing systems, and occasionally outperformed them.

Turner has also collaborated with Microsoft Research in Amsterdam to develop an AI ‘foundation model’ called Aurora, which could accurately predict meteorological events that fall beyond standard weather forecasts, such as cyclone trajectories and air-quality trends [7].

Other AI models are even more ambitious, incorporating global insights from Earth features such as the atmosphere, sea and polar ice to analyse the current climate and predict future changes. For example, in September, engineer James Duncan at the Allen Institute for Artificial Intelligence in Seattle, Washington, and his colleagues described SamudrACE, which integrates AI models of the atmosphere and ocean and can simulate the behaviour of those systems over more than a millennium [8].

AI models put simulation projects that were previously limited to supercomputing facilities into the hands of everyday researchers, says Elizabeth Barnes, an environmental data scientist at Boston University, Massachusetts. “All these science questions I used to have to pass off to other groups, I can do myself now,” she says.

Next-generation nuclear power

Surging investment in AI is creating a commensurate spike in demand for electrical power. The International Energy Agency in Paris predicts that global energy demand from data centres could increase by 15% annually between now and 2030.
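As a rough illustration of what 15% annual growth compounds to, the sketch below assumes a four-year horizon (2026 to 2030) and a baseline normalized to 1. Both are assumptions made for this example, not figures from the International Energy Agency, and the function name is hypothetical.

```python
def compound_growth(rate: float, years: int) -> float:
    """Return the multiple of the starting value after compounding annually."""
    return (1.0 + rate) ** years


# Assumed horizon: four annual steps at the 15% rate quoted above.
factor = compound_growth(0.15, 4)
print(f"Demand after 4 years: {factor:.2f}x the baseline")  # 1.75x
```

Under those assumptions, demand would reach roughly 1.75 times its starting level by 2030.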

Even if the AI boom goes bust, there remains an urgent need to bolster energy grids with climate-friendly power sources, says Jonas Kristiansen Nøland, an energy-systems researcher at the Norwegian University of Science and Technology in Trondheim.

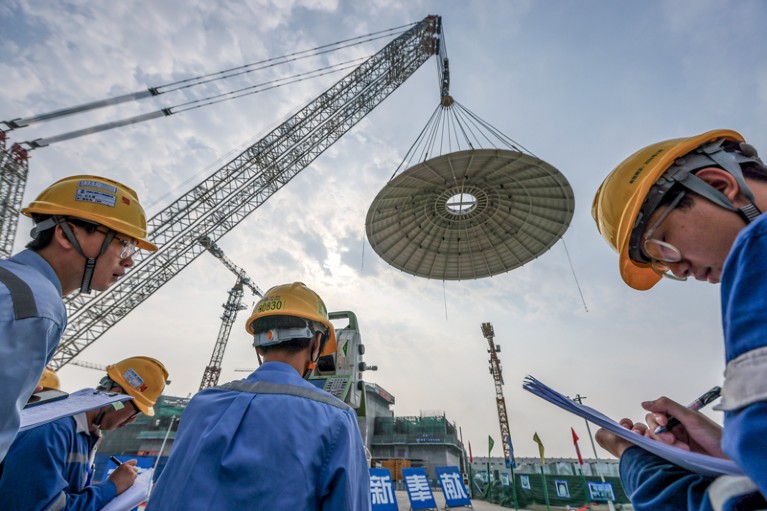

These conditions present a ripe opportunity for the resurgence of nuclear energy, and Nøland is particularly optimistic about small modular reactors (SMRs) — nuclear facilities that produce up to 500 megawatts of power. That’s less than half the output of a standard fission reactor, but sufficient to power hundreds of thousands of homes.

Small modular reactors, such as the Linglong One in China, offer a low-cost, fast-to-build option for nuclear energy generation. Credit: Luo Yunfei/China News Service/VCG/Getty

Russia and China already have active SMRs, and at least 100 projects are now under consideration or development worldwide. The most advanced (including one at Canada’s Darlington nuclear facility in Ontario, scheduled to come online in 2029) are based on designs similar to those of full-scale fission reactors. But next-generation systems are also under development. Nuclear-power company TerraPower in Bellevue, Washington, for example, is pursuing molten-salt reactors, a fuel-efficient design that could greatly reduce nuclear waste and store heat produced during reactor operation for later use as thermal power.

Meanwhile, after decades of hype as the ‘technology of the future’, fusion power is nearing reality. In 2022, the Lawrence Livermore National Laboratory achieved the first demonstration of net energy production from fusion at its National Ignition Facility in Livermore, California. And in 2023, the Joint European Torus near Oxford, UK, set a world record for power production, generating enough energy in five seconds to power 12,000 homes. Separately, Germany’s Wendelstein 7-X facility in Greifswald achieved an endurance record of 43 seconds of sustained operation, showcasing an alternative reactor design that could enable more stable operation than first-generation ‘tokamak’ designs.

Sibylle Günter, a physicist at the Max Planck Institute for Plasma Physics in Garching, Germany, says that these developments are particularly exciting for countries that crave clean energy but are reluctant to engage with nuclear fission — including Germany, which plans to invest €2 billion (US$2.3 billion) in fusion by 2029. She notes that more than 50 fusion-oriented start-up companies are active worldwide, including Commonwealth Fusion Systems in Devens, Massachusetts, which aims to complete construction of a demonstration reactor this year.

Still, the world won’t be running on fusion any time soon. Between fuel production and regulatory and engineering challenges, it could be 20 years before the first commercial reactors come online, Günter notes. But balanced against cheap, safe and abundant power, she says, “20 years is not long”.

Light-microscopy brain mapping

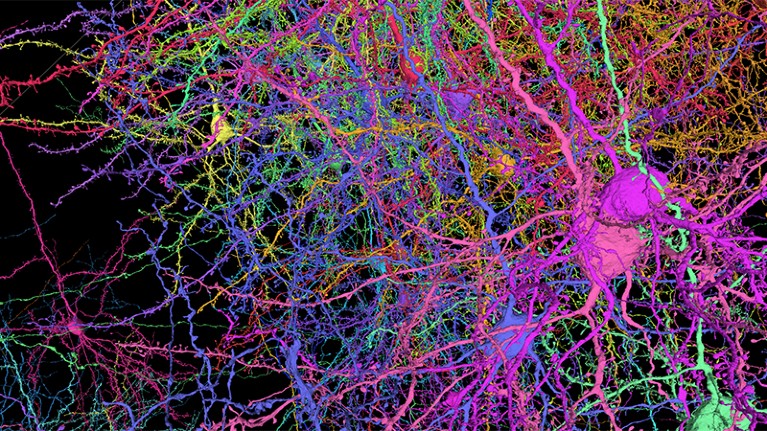

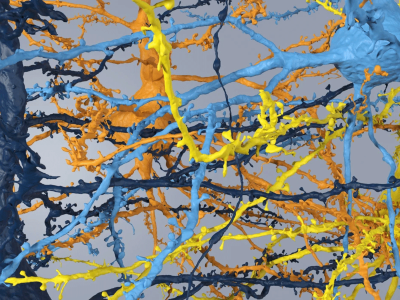

With its capacity to image molecular-scale details precisely, electron microscopy has been the tool of choice for mapping the labyrinthine circuitry of the mammalian brain. Reconstructions of cubic-millimetre-scale volumes of mouse and human brains published in 2024 by the Machine Intelligence from Cortical Networks (MICrONS) consortium and a collaborative effort between Harvard University in Cambridge, Massachusetts, and Google Research in Mountain View, California, respectively (see go.nature.com/4pI6dpe), offer clear testament to electron microscopy’s utility as a powerful tool for connectomics.

Neural connections can be imaged precisely. Credit: Allen Institute

But it’s one thing to map connectivity, and another to interpret it. “You need to differentiate which cells and synapses are there,” says Johann Danzl, an imaging specialist at the Institute of Science and Technology Austria in Klosterneuburg. “Are they excitatory? Are they inhibitory? And then — going more into depth — which types of neurotransmitters are there?”

In May 2025, Danzl’s team described a method for extracting such information. In light-microscopy-based connectomics (LICONN) [9], brain samples are subjected to multiple rounds of expansion microscopy, a process in which tissue is chemically trapped in a hydrogel that expands evenly in all directions, separating the sample’s constituent biomolecules and rendering it transparent. Using protein-specific labels, researchers can visualize nanoscale details of cellular structure and organization with a standard confocal microscope while preserving the tissue’s underlying organization. In this way, Danzl’s team could map the twisty trails of axons and dendrites, as well as categorizing the synapses that they form and classifying the cells involved.

E11 Bio, a non-profit company in Alameda, California, focused on optical connectomics, has addressed another pain point for electron-microscopy-based mapping: proofreading. “If you look at that MICrONS volume, it’s beautiful,” says Andrew Payne, the company’s co-founder and chief executive. But only about “1% of the cells were ultimately reconstructed; the other 99% are not reconstructed to this day”.

In a preprint from September 2025, Payne and his colleagues describe an approach in which they genetically modified mouse neurons to express various combinations of short protein epitopes, such that each cell displays a distinct barcode [10]. These barcodes can then be decoded by sequential staining with fluorescently labelled antibodies, enabling essentially error-free computational mapping and tracing of each neuron in the sample. Using 18 epitopes, the company could resolve some 262,000 barcodes, and Payne says that the technology should be sufficiently scalable to map connectivity across the entire mouse brain.
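The barcode count quoted above is consistent with each of the 18 epitopes being either present or absent in a given cell, because 2^18 = 262,144. That binary reading is an assumption made here (the article does not spell out the encoding), and the function names below are hypothetical. A minimal sketch of the arithmetic and of turning per-epitope staining results into a barcode:

```python
def barcode_count(num_epitopes: int) -> int:
    """Number of distinct present/absent patterns across the epitopes."""
    return 2 ** num_epitopes


def barcode_id(detected: list[bool]) -> int:
    """Pack one detection result per epitope into a single integer barcode."""
    code = 0
    for hit in detected:
        # Shift earlier results left and append this epitope's result as a bit.
        code = (code << 1) | int(hit)
    return code


print(barcode_count(18))                 # 262144
print(barcode_id([True, False, True]))   # 5 (binary 101)
```

Each extra epitope doubles the number of patterns under this assumption, which is why a modest panel of labels can distinguish a very large number of cells.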

It’ll take other advances to make that goal practical, Danzl notes, including more-efficient sample handling and faster imaging. But by slashing proofreading expenses and replacing pricey electron microscopes with readily available confocals, these methods could put mammalian connectomes in closer reach.

Exploring the extremes

Scientists excel at discovering limits — and then pushing beyond them.

Last June, the US National Science Foundation and Department of Energy released early images from the Vera C. Rubin Observatory. Named after the US astronomer who first provided convincing evidence for the existence of dark matter, this groundbreaking facility in the Chilean Andes will, over a ten-year span, collect measurements from each point in the southern sky roughly 800 times. Making use of an innovative multi-mirror design and a massive 3.2-gigapixel digital camera, that scan will yield an authoritative catalogue of celestial objects and how they change over time. “We estimate we’ll have about 20 billion galaxies and close to that number of stars,” says Željko Ivezić, an astrophysicist at the University of Washington in Seattle. “We’ll have more celestial objects catalogued than living people on Earth, and for each of them, we will measure many parameters.”

Thousands of scientists from more than 30 countries have already queued up to use the observatory’s data, which should start rolling out in early 2028. Ivezić anticipates that the facility will tackle questions ranging from surveying asteroids that pose a potential threat to Earth, to proving (or rejecting) the existence of the enigmatic ‘dark energy’ that astronomers have implicated in the accelerating expansion of the Universe.

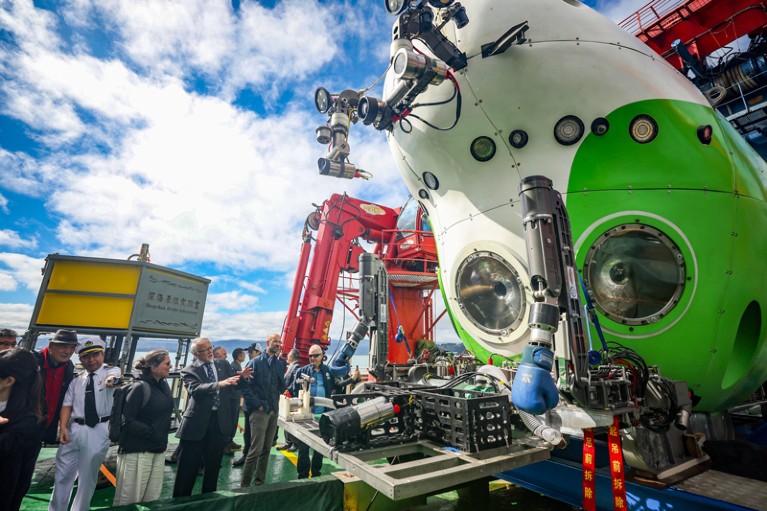

Closer to home, Haibin Zhang, a marine biologist at the Chinese Academy of Sciences in Sanya, has built a career studying life in the ocean’s hadal zone. Located 6 kilometres and more below sea level, this region is home to diverse, deeply weird organisms. But conducting crewed missions at that depth has proved challenging, and most biological samples are collected by trawling the sea bottom with nets. “All of these animals are dead when they are recovered,” says Zhang. “And for some collections, we cannot know exactly where the samples came from.”

Scientists have travelled to the deepest part of the ocean in the submersible Fendouzhe. Credit: Long Lei/Xinhua/Alamy

For the past three years, Zhang has been conducting expeditions on an innovative submersible called Fendouzhe. Fendouzhe — Mandarin for ‘striver’ — was built from a durable, pressure-resistant titanium alloy and equipped with a sophisticated sample-collection arm that can operate more than 10 km down. Zhang has already visited the floor of the Mariana Trench, the deepest part of the ocean, and his team is now surveying the rest of Earth’s hadal depths as part of China’s ongoing Global Trench Exploration and Diving initiative.

These expeditions have been transformative, Zhang says. In one 2025 study, for example, he and his colleagues profiled the population genetics of an unusual family of crustaceans known as amphipods that thrive in the hadal zone [11]. “It’s a very exciting experience,” he says: “I actually saw the animals I research alive.”

mRNA therapeutics

Messenger RNA entered the clinic at the vanguard of a wave of fast-turnaround vaccines against the COVID-19 pandemic-causing virus SARS-CoV-2. By one estimate, these vaccines have saved at least 2.5 million lives [12]. Unfortunately, last August, the administration of US President Donald Trump slashed nearly $500 million in federal funding for mRNA vaccine programmes, raising dubious concerns about the technology’s safety.

The reality is that mRNA-based vaccines and therapies continue to show ever-greater promise. Their ease and low cost of design and manufacture, combined with their fleeting presence in the body, have attracted clinical researchers across domains. “The idea that you could manipulate the human ‘software’ by introducing information in the form of these breakthrough mRNA technologies has so much capacity for changing the world,” says Elias Sayour, a paediatric oncologist at the University of Florida, Gainesville.

Such mRNA-based vaccines are particularly promising in oncology, in which they are being applied as therapies rather than prophylaxis. For example, last February, a US team showed that custom mRNA vaccines encoding tumour-specific antigens could extend, by years, the recurrence-free survival of patients receiving immunotherapy for pancreatic cancer [13].

Such mRNA vaccines could even combat cancer without using tumour-specific antigens. In October 2025, Sayour and radiation oncologist Steven Lin at the MD Anderson Cancer Center in Houston, Texas, reported a retrospective analysis of 180 people with cancer. They found that the duration of overall survival was nearly doubled in those who had received mRNA-based COVID-19 vaccines within 100 days of beginning antitumour immunotherapy versus those who had not [14]. Sayour and his colleagues aim to test this idea directly in a clinical trial in the coming months.

Meanwhile, researchers at the University of Pennsylvania in Philadelphia showed in 2022 that they could use mRNA to reprogram immune cells in vivo to express chimeric antigen receptor (CAR) proteins [15], enabling those cells to rapidly target and eliminate disease-promoting cells and proteins in animal models. Normally, CAR-T therapy requires a gruelling regimen of bone-marrow collection, stem-cell engineering and transplantation; an in vivo approach could make such treatment more accessible without compromising performance. “In humanized mouse studies and non-human primates, the efficacy was really nice,” says Hamideh Parhiz, a drug-delivery specialist who co-led the study.

CAR T cells are used mainly to treat cancer and, more recently, autoimmune disorders, but Parhiz and her colleagues have been testing their ability to block the formation of organ-killing scar tissue in fibrotic disorders. Her team has also demonstrated in vivo mRNA reprogramming of blood stem cells — a potential gateway to directly treating numerous genetic disorders by replacing missing or defective proteins.

Can this remarkable potential help mRNA therapeutics to overcome political hostility? Sayour hopes so. “The technology has been demonized,” he says. “Why would we dismiss something that exciting? It’s stunning to me.”

Quantum computing

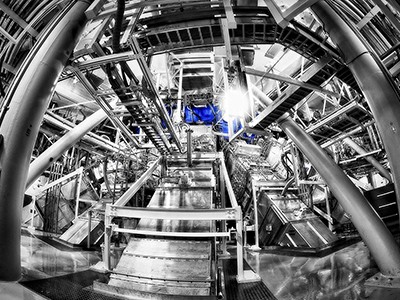

In theory, quantum computing enables simulations of complex scientific phenomena that surpass the capabilities of classical computers, but researchers have struggled to bring these systems into the real world. One big obstacle is error-checking. Classical computers rely on redundant digital bits to assess the fidelity of their data, but that isn’t possible with quantum computers: quantum data cannot be copied directly, and the act of measuring a ‘qubit’ effectively destroys its quantum state.
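The classical redundancy mentioned above can be illustrated with a three-bit repetition code, in which each bit is stored as several copies and read back by majority vote. This is a generic textbook scheme chosen for illustration, not a description of any particular computer, and it is exactly the kind of copying that quantum data do not allow. The function names are hypothetical.

```python
from collections import Counter


def encode(bit: int, copies: int = 3) -> list[int]:
    """Store a bit redundantly as an odd number of identical copies."""
    return [bit] * copies


def decode(received: list[int]) -> int:
    """Recover the bit by majority vote over the received copies."""
    return Counter(received).most_common(1)[0][0]


codeword = encode(1)      # [1, 1, 1]
codeword[0] ^= 1          # a single bit flip corrupts one copy: [0, 1, 1]
print(decode(codeword))   # 1, the original bit survives the error
```

With three copies, any single flipped bit is outvoted; two simultaneous flips would defeat the scheme, which is why stronger protection needs more redundancy.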

Existing solutions require multiple fact-checking qubits for every data-processing ‘logical qubit’. In a worst-case scenario, practical computing tasks could require millions or billions of qubits — whereas current designs incorporate at most a few thousand. “Even four or five years ago, if you had asked anyone: ‘will we be doing meaningful quantum error-correction at scale on any of these processors?’, a lot of people would’ve just laughed you out of the room,” says Nathalie de Leon, a physicist at Princeton University in New Jersey.

Nobody’s laughing now. In 2023, researchers at Google Quantum AI in Santa Barbara, California, reported the first successful demonstration of an error-proofed logical qubit on their superconductor-based platform [16]. The utility of that system was limited by the short lifespan of its qubits, which create noise as they degrade and undermine error-correction, but de Leon and her Princeton colleagues Andrew Houck and Robert Cava have developed strategies for extending qubit longevity considerably.

In 2021, their team effectively tripled qubit lifespan to more than 300 microseconds with a tantalum-based superconductor formulation that can be cleaned more rigorously to eliminate performance-degrading contaminants [17]. And in November, by systematically evaluating the fabrication process, de Leon and Houck devised a workflow that pushed qubit lifetime above 1.6 milliseconds [18]. De Leon is now working with Google to improve its ‘Willow’ quantum processor. She is also working to push qubit lifetime by another factor of ten; if successful, working processors could realistically be built using just 30,000 qubits, she says. “That actually feels inevitable.”

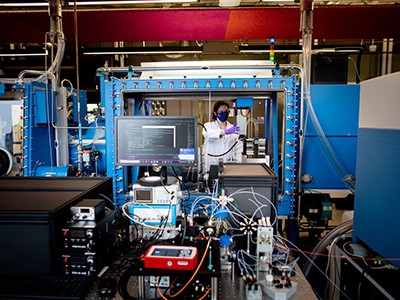

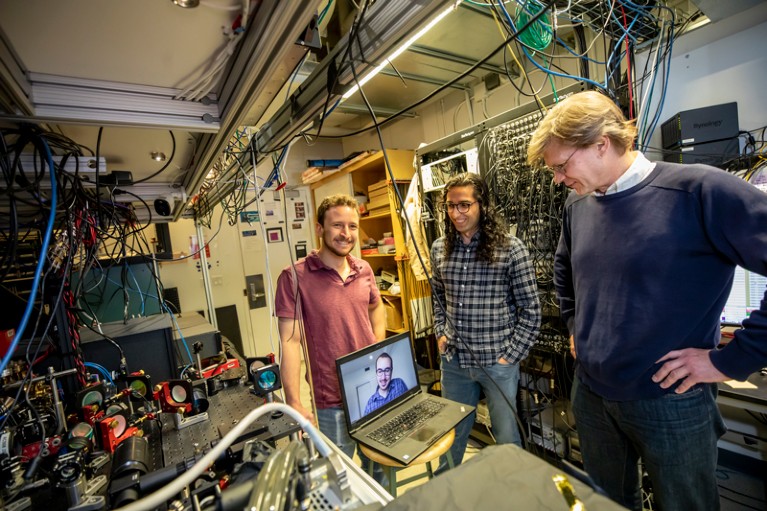

Mikhail Lukin (right) and his team developed a neutral-atom quantum computer (left). Credit: Rose Lincoln/Harvard Staff Photographer (CC BY)

Until recently, the most advanced quantum systems, such as Google’s, were built using superconductors. But an alternative approach, in which the qubits are provided by large arrays of neutral atoms, has gained ground. Although slower than superconductors, neutral-atom qubits are easier to assemble and manipulate at scale, and physicist Mikhail Lukin’s team at Harvard University has led the push to make such systems practical.

Last November, Lukin and his colleagues presented a ‘universal’ design for a neutral-atom-based processor with robust error-correction capabilities [19]. That system featured just 448 qubits, but Lukin’s team has also showcased a 3,000-qubit processor that can run for hours [20]. He says that these techniques will “definitely scale” to tens of thousands of atoms, and probably to hundreds of thousands. “The challenge now is to really control and make meaningful circuits out of them.” Lukin co-founded QuEra in Cambridge, Massachusetts, to commercialize these technologies, and a practical system could be just a few years away, he says.

Then, the real work begins: working out what quantum computing can truly deliver, and where it outperforms existing technology. “These are really new kinds of instruments — by some measures, they’re not even computers,” says Lukin. “What’s really exciting is that these systems are now working already at a reasonable scale and we can start experimenting with them to figure out what we can do with them.”

doi: https://doi.org/10.1038/d41586-026-00188-6

References

1. Kawai, T. et al. N. Engl. J. Med. 392, 1933–1940 (2025).
2. Griffith, B. P. et al. N. Engl. J. Med. 387, 35–44 (2022).
3. Tao, K. S. et al. Nature 641, 1029–1036 (2025).
4. He, J. et al. Nature Med. 31, 3388–3393 (2025).
5. Bi, K. et al. Nature 619, 533–538 (2023).
6. Allen, A. et al. Nature 641, 1172–1179 (2025).
7. Bodnar, C. et al. Nature 641, 1180–1187 (2025).
8. Duncan, J. P. C. et al. Preprint at arXiv https://doi.org/10.48550/arXiv.2509.12490 (2025).
9. Tavakoli, M. R. et al. Nature 642, 398–410 (2025).
10. Park, S. Y. et al. Preprint at bioRxiv https://doi.org/10.1101/2025.09.26.678648 (2025).
11. Zhang, H. et al. Cell 188, 1378–1392 (2025).
12. Ioannidis, J. P. A., Pezzullo, A. M., Cristiano, A. & Boccia, S. JAMA Health Forum 6, e252223 (2025).
13. Sethna, Z. et al. Nature 639, 1042–1051 (2025).
14. Grippin, A. J. et al. Nature 647, 488–497 (2025).
15. Rurik, J. G. et al. Science 375, 91–96 (2022).
16. Google Quantum AI. Nature 614, 676–681 (2023).
17. Place, A. P. M. et al. Nature Commun. 12, 1779 (2021).
18. Bland, M. P. et al. Nature 647, 343–348 (2025).
19. Bluvstein, D. et al. Nature 649, 39–46 (2026).
20. Chiu, N.-C. et al. Nature 646, 1075–1080 (2025).